I attended this workshop, held as part of KDD 2021 and organised by Aussies Chang Xu (U.Syd), Siqi Ma (UQ) and David Lo (SMU). I gave the introductory talk (PDF), which put deep learning into context and explained the background to language models and how they could be used. Then Nan Duan (Microsoft Research Asia) told everyone how his 20-person international team had been doing just that for several years. Their work is well covered in the usual places (ZDNet, The Verge, etc.) and is absolutely amazing stuff. This will revolutionise coding the way deep language models have revolutionised NLP.


Machine Learning Research Tutorials

March 8, 2020

Machine learning has become one of the hottest areas in computer science and technology. Both industry and academia have gone gaga. Big tech companies each send hundreds of people to the top research conferences, and the conferences are growing so fast that they are now beyond capacity. But how do you learn about machine learning in the first place? Assuming you have a strong STEM undergraduate degree and are research savvy, this page points to some appropriate resources for research. These are intended for starting PhD students.

If you are more interested in learning the basics as a potential user, then you will need to find different resources, such as the blogs up on https://medium.com/search?q=machinelearning&ref=opensearch or MOOCs such as Coursera.

University Classes

Places like Stanford and CMU have very good advanced masters-level classes, ideal for starting PhD students. Slides, and oftentimes lectures, are online for the public, e.g., deep networks for NLP at http://web.stanford.edu/class/cs224n/

See also Lex Fridman’s seminars up at https://deeplearning.mit.edu/ . They give a very good general overview of capabilities and directions.

Good Venues

Excellent tutorials have recently become available at the major conferences, oftentimes with videos and/or slides on the website, although sometimes you have to hunt through the authors’ webpages. The top conferences include AI&Stats, IJCAI, ICML, ACL … be warned, some tutorials are a bit specialised or advanced.

- https://nips.cc/Conferences/2018/Schedule?type=Tutorial

- https://icml.cc/Conferences/2019/Schedule

- tutorials are in pink up the top; e.g. Active Learning, Attention, Meta Learning

Machine Learning Summer School (MLSS)

This series is managed by venerable machine learning researchers, and only a few schools are held per year internationally. Their list of venues is at http://mlss.cc/ . You have to go to each and navigate disparate and sometimes wacky layouts to locate slides and/or videos.

- 2019 in London is high quality

- https://sites.google.com/view/mlss-2019/lectures-and-tutorials

- https://github.com/mlss-2019/slides

- see Shakir Mohamed’s VAE.pdf, more a checklist than a tutorial

- see Nowozin’s GAN tutorial

- 2019 Stellenbosch (Africa)

- 2018 Madrid

- Slides and videos here: http://mlss.ii.uam.es/mlss2018/speakers.html

- NOTE: some are earlier versions of above, so take the later one!

AutoML

The Freiburg-Hannover group has a great sequence of tutorials on AutoML and learning to learn:

- https://www.automl.org/events/tutorials/

- https://nips.cc/Conferences/2018/Schedule?showEvent=10979 the NeurIPS 2018 version with video

VideoLectures.net

This initiative of the Jozef Stefan Institute, Ljubljana, records many great tutorials, though coverage has not been as good recently. Go searching for your favorite subjects:

- NLP and Deep Learning 1: Human Language & Word Vectors, by Chris Manning, 2015

- e.g., on the subject of “Machine Learning”

Others

- https://learningnets.github.io/KDD19_Tutorial/ KDD tute by a star-studded list, many slides there

- health informatics http://www.kmd.ovgu.de/KMD+Events/Data+Science+for+Health.html

- https://sites.google.com/view/deepdial/ conversational AI … the new wa

Machine Learning tutorial at ACSW 2020

February 5, 2020

Australasian Computer Science Week is a collection of computer science events for Australian and New Zealand CS researchers. I’m giving a tutorial on Machine Learning as part of their HDR/ECR programme. Machine Learning has gone crazy in the last few years, growing exponentially and with ever vanishing publishing cycles, entering its own singularity I believe. So my slides are quite general and cover big issues rather than lots of detail. It’s a longer talk so I’ll be skipping a few slides. I left in some of the math that I won’t cover much in the talk, for interested readers. As always, there are way too many perspectives and variations to pick from, but I am focusing on probabilistic interpretations.

Interview with Monash Tech Talks team

December 16, 2019

I did another interview, MC’d by our Dean John Whittle and Dr. Catherine Lopes, again on AI and machine learning. This one was professionally organised with a green screen and in an official interview studio. I had to find a plain, non-green shirt, no stripes or patterns!

Prof. John Whittle, Dr. Catherine Lopes and Wray at an interview run by Monash Tech Talks at the Redback Conferencing facilities, 9th Dec 2019

Perspectives on Career Pathways

November 9, 2019

Wray with Adel Toosi at the ECR workshop, receiving some fabulous Aboriginal artwork.

I gave a talk to the Early Career Researchers in our faculty. They had held a workshop on career pathways. I showed them the crazy ride I’ve had (a so-called career path) and talked about things like “Know Your Strengths”, “Long Term Planning”, “Preparing for Industry” and “Preparing for More Research”. My slides (slightly updated) are available here. Adel put the original ones up on the Monash share drive.

Southeast Asia Machine Learning School

July 9, 2019

I was very fortunate to be asked to give a lecture on “Foundations of Supervised Learning” at SEA-MLS in Jakarta on 8th July. The school was co-organised by Google, so the opening talks were by Google and a member of the Indonesian government. A big crowd too! Everyone’s slides are up on the schedule page, and mine are copied here.

I had never been to Jakarta, so it was an exciting opportunity to meet some colleagues and some students in a lovely environment. Monash has a school in Malaysia, so a few Malaysia Monash folks turned up too. Here we are after my lecture.

Monash Malaysia and Melbourne students at SEA-MLS.

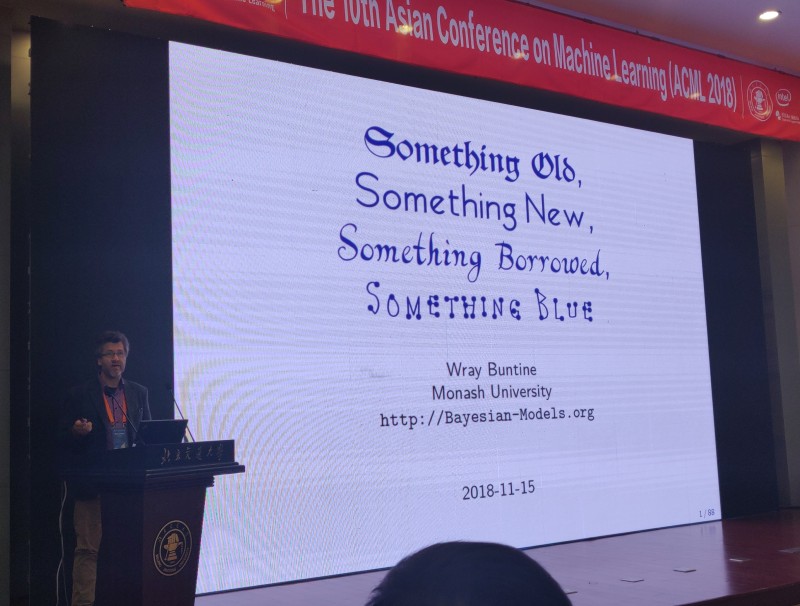

Invited talk at ACML in Beijing

October 11, 2018

I’ve given an invited talk at ACML in Beijing in November: see Invited Speakers at the ACML website.

I talked about the state of Machine Learning, contrasting the old with the new, and discussed where we may head next. Moreover, I gave some warnings about some problems we are currently facing. PDF slides for the talk are here. The abstract is given below. Prof. Jun Zhu (Tsinghua U.) has had some similar ideas so we conferred afterwards.

Several of us from Monash went: in the picture are Ye Zhu, Wray Buntine, Lan Du, Yuan Jin and He Zhao.

Something Old, Something New, Something Borrowed, Something Blue

Something Old: In this talk I will first describe some of our recent work with hierarchical probabilistic models that are not deep neural networks. Nevertheless, these are currently among the state of the art in classification and in topic modelling: k-dependence Bayesian networks and hierarchical topic models, respectively, and both are deep models in a different sense. These represent some of the leading edge machine learning technology prior to the advent of deep neural networks.

Something New: On deep neural networks, I will describe as a point of comparison some of the state of the art applications I am familiar with: multi-task learning, document classification, and learning to learn. These build on the RNNs widely used in semi-structured learning. The old and the new are remarkably different. So what are the new capabilities deep neural networks have yielded? Do we even need the old technology? What can we do next?

Something Borrowed: to complete the story, I’ll introduce some efforts to combine the two approaches, borrowing from earlier work in statistics.

ECML-PKDD talk on Bayesian network classifiers

September 14, 2018

On the 14th September 2018 I presented the following paper at ECML-PKDD in Dublin. The slides for the talk are here.

We figured out how to do good smoothing of Bayesian network classifiers. The same technique works for decision trees, and in fact beats all known algorithms for smoothing/pruning!

“Accurate parameter estimation for Bayesian network classifiers using hierarchical Dirichlet processes”, by François Petitjean, Wray Buntine, Geoffrey I. Webb and Nayyar Zaidi, in Machine Learning, 18th May 2018, DOI 10.1007/s10994-018-5718-0. Available online at Springer Link. Presented at ECML-PKDD 2018 in Dublin in September, 2018.

Some research papers on hierarchical models

May 15, 2018

“Accurate parameter estimation for Bayesian network classifiers using hierarchical Dirichlet processes”, by François Petitjean, Wray Buntine, Geoffrey I. Webb and Nayyar Zaidi, in Machine Learning, 18th May 2018, DOI 10.1007/s10994-018-5718-0. Available online at Springer Link. To be presented at ECML-PKDD 2018 in Dublin in September, 2018.

Abstract This paper introduces a novel parameter estimation method for the probability tables of Bayesian network classifiers (BNCs), using hierarchical Dirichlet processes (HDPs). The main result of this paper is to show that improved parameter estimation allows BNCs to outperform leading learning methods such as random forest for both 0–1 loss and RMSE, albeit just on categorical datasets. As data assets become larger, entering the hyped world of “big”, efficient accurate classification requires three main elements: (1) classifiers with low bias that can capture the fine-detail of large datasets (2) out-of-core learners that can learn from data without having to hold it all in main memory and (3) models that can classify new data very efficiently. The latest BNCs satisfy these requirements. Their bias can be controlled easily by increasing the number of parents of the nodes in the graph. Their structure can be learned out of core with a limited number of passes over the data. However, as the bias is made lower to accurately model classification tasks, so is the accuracy of their parameters’ estimates, as each parameter is estimated from ever decreasing quantities of data. In this paper, we introduce the use of HDPs for accurate BNC parameter estimation even with lower bias. We conduct an extensive set of experiments on 68 standard datasets and demonstrate that our resulting classifiers perform very competitively with random forest in terms of prediction, while keeping the out-of-core capability and superior classification time.

Keywords Bayesian network · Parameter estimation · Graphical models · Dirichlet processes · Smoothing · Classification
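The trade-off described in the abstract above, where adding parents lowers bias but leaves each parameter with ever less data, can be illustrated with a minimal sketch. This is not the HDP method of the paper: it is a much simpler recursive back-off with a fixed smoothing strength, where the estimate for a full parent context is pulled towards the estimate with one fewer parent, and so on down to the uniform distribution. The function name `smoothed_prob`, the parameter `alpha`, the dict-per-row data format and the toy data are all assumptions made for illustration.

```python
def smoothed_prob(rows, target, value, context, n_values, alpha=1.0):
    """Back-off estimate of P(target = value | context).

    rows     : list of dicts, one per training example (assumed toy format)
    context  : list of (variable, value) pairs, most important parent first
    n_values : number of distinct values the target can take
    alpha    : fixed smoothing strength (an assumption; the paper infers
               the amount of smoothing with an HDP instead)
    """
    if context:
        # The prior is the same estimate with the last parent dropped.
        prior = smoothed_prob(rows, target, value, context[:-1], n_values, alpha)
    else:
        # With no parents left, back off to the uniform distribution.
        prior = 1.0 / n_values
    # Keep only the rows that agree with every (variable, value) in the context.
    matching = [r for r in rows if all(r[var] == val for var, val in context)]
    count = sum(1 for r in matching if r[target] == value)
    return (count + alpha * prior) / (len(matching) + alpha)


# Hypothetical toy data: predict a word from the class label and previous word.
toy_rows = [
    {"label": "spam", "prev": "free", "word": "money"},
    {"label": "spam", "prev": "free", "word": "money"},
    {"label": "spam", "prev": "your", "word": "prize"},
    {"label": "ham", "prev": "your", "word": "report"},
    {"label": "ham", "prev": "the", "word": "report"},
]
words = ["money", "prize", "report"]

seen = [("label", "spam"), ("prev", "free")]
unseen = [("label", "ham"), ("prev", "free")]  # never occurs in toy_rows

for ctx in (seen, unseen):
    probs = {w: smoothed_prob(toy_rows, "word", w, ctx, len(words)) for w in words}
    print(ctx, {w: round(p, 3) for w, p in probs.items()})
```

For a context that never occurs in the data, the estimate falls back entirely on the estimate with one fewer parent, which is the behaviour that keeps low-bias models usable when counts are sparse. How strongly to back off at each level is exactly the part this sketch fixes by hand.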

“Leveraging external information in topic modelling”, by He Zhao, Lan Du, Wray Buntine & Gang Liu, in Knowledge and Information Systems, 12th May 2018, DOI 10.1007/s10115-018-1213-y. Available online at Springer Link. This is an update of our ICDM 2017 paper.

Abstract Besides the text content, documents usually come with rich sets of meta-information, such as categories of documents and semantic/syntactic features of words, like those encoded in word embeddings. Incorporating such meta-information directly into the generative process of topic models can improve modelling accuracy and topic quality, especially in the case where the word-occurrence information in the training data is insufficient. In this article, we present a topic model called MetaLDA, which is able to leverage either document or word meta-information, or both of them jointly, in the generative process. With two data augmentation techniques, we can derive an efficient Gibbs sampling algorithm, which benefits from the fully local conjugacy of the model. Moreover, the algorithm is favoured by the sparsity of the meta-information. Extensive experiments on several real-world datasets demonstrate that our model achieves superior performance in terms of both perplexity and topic quality, particularly in handling sparse texts. In addition, our model runs significantly faster than other models using meta-information.

Keywords Latent Dirichlet allocation · Side information · Data augmentation · Gibbs sampling

“Experiments with Learning Graphical Models on Text”, by Joan Capdevila, He Zhao, François Petitjean and Wray Buntine, in Behaviormetrika, 8th May 2018, DOI 10.1007/s41237-018-0050-3. Available online at Springer Link. This is work done by Joan Capdevila during his visit to Monash in 2017.

Abstract A rich variety of models are now in use for unsupervised modelling of text documents, and, in particular, a rich variety of graphical models exist, with and without latent variables. To date, there is inadequate understanding about the comparative performance of these, partly because they are subtly different, and they have been proposed and evaluated in different contexts. This paper reports on our experiments with a representative set of state of the art models: chordal graphs, matrix factorisation, and hierarchical latent tree models. For the chordal graphs, we use different scoring functions. For matrix factorisation models, we use different hierarchical priors, asymmetric priors on components. We use Boolean matrix factorisation rather than topic models, so we can do comparable evaluations. The experiments perform a number of evaluations: probability for each document, omni-directional prediction which predicts different variables, and anomaly detection. We find that matrix factorisation performed well at anomaly detection but poorly on the prediction task. Chordal graph learning performed the best generally, and probably due to its lower bias, often out-performed hierarchical latent trees.

Keywords Graphical models · Document analysis · Unsupervised learning · Matrix factorisation · Latent variables · Evaluation

Research Career Perspective

March 23, 2018

I was asked to do a general review of my research over the last decade or two, or three, or four … Gawd, how long is it? Let’s just say I remember loading punch cards and tape drives, and being the first guy in the computer science class to use a … wait for it … a full-screen editor called “vi” … because when I started everyone used a line editor called “ed”. Students still remark how I effortlessly switch between “vi”, “emacs”, and “perl -pi -e” during work. Not sure that’s good.

So the slides for the talk are here. There is a lot more I could have said.